Auto-Muting Ads: The Raspberry Pi Experiment

I tried running ad detection on a Raspberry Pi. It was an adventure.

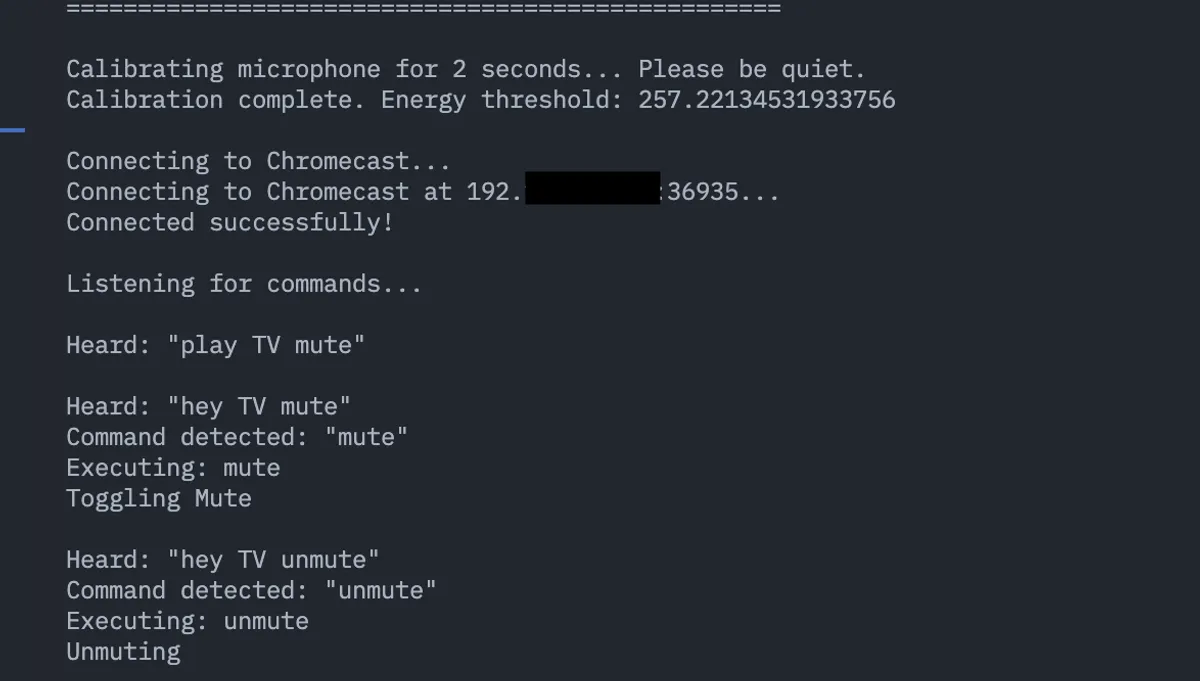

In my last post, I built a voice-controlled Chromecast so I could yell “mute” at my TV instead of hunting for the remote. It worked great, but I ended that post with a thought: what if I didn’t have to say anything at all? What if my setup could detect ads automatically and mute them for me?

So I went down that rabbit hole. Spoiler: it worked, but not on the hardware I originally planned.

The plan

The idea was simple:

- Capture the video output from my Chromecast using a cheap HDMI capture card

- Run OCR on each frame to look for ad indicators (like “Skip Ad” or “Ad 1 of 2”)

- When an ad is detected, automatically mute. When it ends, unmute.
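
The mute/unmute step boils down to a tiny state machine. Here's a minimal sketch of that idea, not the exact code from my script: the class name and the `clear_frames_needed` debounce are assumptions added for illustration, so a single missed OCR read doesn't unmute in the middle of an ad.

```python
class AdMuter:
    """Tracks ad state and reports when the mute state should change."""

    def __init__(self, clear_frames_needed=2):
        # Hypothetical debounce: require this many consecutive ad-free
        # frames before unmuting.
        self.clear_frames_needed = clear_frames_needed
        self.muted = False
        self._clear_streak = 0

    def update(self, ad_detected):
        """Return "mute", "unmute", or None for the latest frame."""
        if ad_detected:
            self._clear_streak = 0
            if not self.muted:
                self.muted = True
                return "mute"
            return None
        if self.muted:
            self._clear_streak += 1
            if self._clear_streak >= self.clear_frames_needed:
                self.muted = False
                self._clear_streak = 0
                return "unmute"
        return None
```

The caller feeds in one boolean per processed frame and only talks to the TV when `update` returns an action, which keeps the detection and the muting nicely separate.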

For the OCR part, I went with EasyOCR. It’s a Python library that uses PyTorch under the hood and works surprisingly well for screen text. I built a detector that looks for specific patterns - things like “skip ad”, “ad in X seconds”, or just a standalone “Ad” label in the corner.

The detection logic turned out to be trickier than expected. You can’t just look for the word “ad” because it shows up everywhere - “download”, “read”, “added”. I had to build a list of false positives and use word boundary matching to avoid muting during normal content.
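
To make the word-boundary idea concrete, here's a simplified sketch. The pattern list and the function name are illustrative assumptions, not my full detector, and the real list depends on which streaming app you're watching.

```python
import re

# Illustrative patterns only. \b is a word boundary, so "ad" inside
# "download" or "added" never matches.
AD_PATTERNS = [
    re.compile(r"\bskip\s+ads?\b", re.IGNORECASE),
    re.compile(r"\bad\s+\d+\s+of\s+\d+\b", re.IGNORECASE),
    re.compile(r"\bad\s+in\s+\d+\s+seconds?\b", re.IGNORECASE),
]

# A bare "Ad" label counts only when it is the entire OCR text fragment.
STANDALONE_AD = re.compile(r"^\s*ad\s*$", re.IGNORECASE)


def is_ad_text(fragments):
    """Return True if any OCR text fragment looks like an ad indicator."""
    for text in fragments:
        if STANDALONE_AD.match(text):
            return True
        if any(pattern.search(text) for pattern in AD_PATTERNS):
            return True
    return False
```

Each fragment here stands for one piece of text that the OCR step found on screen. Requiring the bare "Ad" label to be the whole fragment is what keeps ordinary sentences from triggering a mute.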

Enter the Raspberry Pi

I had a Raspberry Pi 4 sitting around, and it seemed like the perfect always-on device for this. Small, low power, quiet. I could stick it behind my TV and forget about it.

Getting things set up was… an experience.

First, PyTorch. I installed the latest version and tried to run my script. Immediately hit this:

Illegal instruction (core dumped)

Not exactly helpful. After some digging, I found a GitHub issue describing the exact problem. Turns out PyTorch 2.6+ uses ARM64 atomic instructions (such as ldaddal) from the ARMv8.1 Large System Extensions, which the Raspberry Pi 4’s Cortex-A72 processor doesn’t support. The Pi 5’s Cortex-A76 has these instructions, but the Pi 4 doesn’t.

The fix was simple: downgrade to PyTorch 2.5.1. That version works fine on the Pi 4.
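
If you want to check a board before installing anything, Linux on ARM64 lists an "atomics" flag in /proc/cpuinfo when the CPU supports these instructions. Here's a small sketch of that check; the function name is my own, and it only parses text you hand it.

```python
def has_lse_atomics(cpuinfo_text):
    """Return True if a 'Features' line in cpuinfo lists the 'atomics' flag."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        # Match the flag as a whole word, not as a substring of another flag.
        if key.strip().lower() == "features" and "atomics" in value.split():
            return True
    return False
```

On the Pi itself you'd call it as `has_lse_atomics(open("/proc/cpuinfo").read())`. If it comes back False, pinning the older release with something like `pip install torch==2.5.1` is the route I took, assuming a matching wheel is available for your Python version.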

A small hiccup

With PyTorch sorted, I ran my script and… it still failed. The frame capture kept erroring out.

Turns out the script was trying to save the captured frame to a samples directory that didn’t exist on the Pi. I had that directory on my Mac but never pushed it to git. Quick fix: save the temp frame to /tmp instead. Problem solved.
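
Both ways of avoiding this are a couple of lines each. This is a sketch with hypothetical helper names rather than the code in my script: either put the scratch frame in the system temp directory, or create the output directory on startup instead of assuming it exists.

```python
import os
import tempfile


def temp_frame_path(filename="frame.jpg"):
    """Return a path for the scratch frame inside the system temp directory."""
    # tempfile.gettempdir() is typically /tmp on Linux.
    return os.path.join(tempfile.gettempdir(), filename)


def ensure_dir(path):
    """Create a directory (and any parents) if missing; fine if it exists."""
    os.makedirs(path, exist_ok=True)
    return path
```

Using `tempfile.gettempdir()` rather than a hard-coded /tmp means the same script keeps working on the Mac too.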

The moment of truth

Everything was finally set up. I ran the script, held my breath, and… it worked. Kind of.

The detection was accurate. It correctly identified when an ad was playing. The muting worked. But there was one major problem.

It was painfully slow.

Each frame took several seconds to process. By the time the Pi detected an ad, I’d already been listening to it for a while. EasyOCR is doing a lot under the hood - running a neural network to find text regions, then another to recognize the characters. On my Mac with its M-series chip, this takes maybe 200-300ms. On the Pi 4’s much slower CPU? More like 3-4 seconds per frame.

I tried some optimizations:

- Lowered the capture resolution from 1080p to 720p

- Reduced the image size before running OCR

- Only scanned specific regions of the frame where ad indicators typically appear (corners and edges)
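
The last two optimizations are mostly arithmetic on frame dimensions. Here's a rough sketch of that math; the 25% corner size, the 640-pixel cap, and the function names are assumptions for illustration, not the values I settled on.

```python
def corner_regions(width, height, frac=0.25):
    """Return (left, top, right, bottom) boxes for the four frame corners."""
    w = int(width * frac)
    h = int(height * frac)
    return {
        "top_left": (0, 0, w, h),
        "top_right": (width - w, 0, width, h),
        "bottom_left": (0, height - h, w, height),
        "bottom_right": (width - w, height - h, width, height),
    }


def scaled_size(width, height, max_width=640):
    """Return a (width, height) no wider than max_width, keeping aspect ratio."""
    if width <= max_width:
        return width, height
    scale = max_width / width
    return max_width, round(height * scale)
```

With a frame held as a NumPy array, a box is cropped with `frame[top:bottom, left:right]`. At 720p and these settings, four corner crops add up to a quarter of the pixels in the full frame.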

It helped, but not enough. The Pi just doesn’t have the compute power for real-time-ish OCR.

Time for a new plan

After watching my Pi struggle through a few ad breaks, I decided to try different hardware. The issue isn’t the code - it’s the lack of GPU acceleration. EasyOCR can use CUDA if you have an NVIDIA GPU, but the Pi doesn’t have one.

Enter the Jetson.

NVIDIA’s Jetson boards are basically Raspberry Pi-sized computers with actual GPUs built in. They’re designed exactly for this kind of edge AI workload. The 8GB Orin Nano has 1024 CUDA cores and can run PyTorch with full GPU acceleration.

I’m seriously considering picking one up. The code should work with minimal changes since it already has gpu=True flags in place.
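
One way to keep a single script working on both kinds of hardware is to set that flag from what PyTorch reports at runtime. This is a sketch of the idea, not what my script does today, and the helper names are hypothetical. The imports are deferred so the helpers can be defined even on a machine without the libraries installed.

```python
def cuda_available():
    """Return True only if PyTorch imports cleanly and sees a CUDA device."""
    try:
        import torch
    except (ImportError, OSError):
        return False
    return torch.cuda.is_available()


def make_reader(languages=("en",)):
    """Build an EasyOCR reader, using the GPU only when one is usable."""
    import easyocr  # imported lazily; requires EasyOCR to be installed

    return easyocr.Reader(list(languages), gpu=cuda_available())
```

On the Pi this falls back to the CPU, and on a Jetson with a CUDA-enabled PyTorch build it should pick up the GPU without any code changes.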

What I learned

Sometimes the cheapest hardware isn’t the right hardware. The Pi is great for a lot of things, but running neural networks isn’t one of them. If I were doing simple image processing or rule-based detection, it would be fine. But the moment you bring in deep learning models, you need something with more power.

That said, the Pi experiment wasn’t wasted time. I found bugs that would have bitten me later anyway, and I have a working ad detection system - I just need to run it on hardware that can keep up.

If I end up getting a Jetson, I’ll write a follow-up on whether it finally delivers the ad-free existence I’m chasing.